Audio Basis - articles about audio

Bit depth is a value that defines the number of discrete levels a digital-signal sample can take. Audio bit depth and video bit depth should be distinguished. Keep reading for details...

Bit-depth audio

Sound is an oscillation of air pressure. The pressure on the ear changes over time.

The stronger the pressure changes, the louder the sound.

We have an analog signal as air pressure. The analog signal is continuous.

At each instant the intensity has some value.

Converting an analog signal to digital format

Measurements of the instantaneous intensity value are stored in computer memory.

Such measurements are performed at equal time intervals. The sequence of these measurements in memory is a digital signal.
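The measurement at equal time intervals can be sketched in a few lines of Python. This is only an illustration: the function names `sample_signal` and `tone`, the 1 kHz sine and the 8000 Hz sample rate are assumptions chosen for the example, not values from the article.

```python
import math

def sample_signal(signal, sample_rate, duration):
    """Measure signal(t) at equal time steps of 1 / sample_rate seconds."""
    count = int(round(sample_rate * duration))
    return [signal(n / sample_rate) for n in range(count)]

def tone(t):
    # Hypothetical "analog" signal: a 1 kHz sine with amplitude 1.0
    return math.sin(2 * math.pi * 1000 * t)

# One millisecond of the tone measured 8000 times per second
samples = sample_signal(tone, sample_rate=8000, duration=0.001)
print(len(samples))  # number of stored measurements
```

The list `samples` plays the role of the digital signal: a sequence of measurements kept in memory.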

What is the bit depth formula?

The measurement is called a "sample". A sample has minimum and maximum values.

The range between the minimum and maximum is divided into equal shares. Suppose we have N shares.

N is called the "number of quantization levels". It's an integer value.

One share = one quantization level

In computers, numbers are stored in a memory cell.

The cell contains the number in binary form, as several bits. A bit is zero or one.

The memory cell has a fixed length in bits.

To store the sample in computer memory in the most efficient way, it is suitable to choose:

$N = 2^m$, where

m is the bit depth, i.e. the length of the memory cell in bits.

Audio bit depth determines the number of quantization levels.
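As a quick check of the relation between bit depth and the number of quantization levels, here is a minimal Python sketch. The function name `quantization_levels` is hypothetical and used only for this illustration.

```python
def quantization_levels(bit_depth):
    """Number of levels N that fit into a memory cell of bit_depth bits."""
    return 2 ** bit_depth

for m in (8, 16, 24):
    print(m, "bits:", quantization_levels(m), "levels")
```

Each additional bit doubles the number of levels.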

You can look at the example below.

However, bit depth may refer to integer or floating-point numbers.

Integer bit depth

Integer bit depth is a number of bits: 8, 12, 16, 20, 24, 32 or 64.

A bit is 0 or 1. With 8 bits, computer memory can store numbers from -128 to 127.

The most significant bit indicates the sign (plus or minus).

The remaining seven bits hold the value; negative numbers are stored in two's complement form:

2 = 0000 0010

1 = 0000 0001

0 = 0000 0000

-1 = 1111 1111

-2 = 1111 1110

127 and -128 are relative numbers that correspond to the maximum signal level for 8-bit resolution.
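The bit patterns above match the two's complement form, which can be reproduced with a short Python sketch. The helper name `to_twos_complement` is hypothetical; the grouping into blocks of four bits only mirrors the notation used in the list.

```python
def to_twos_complement(value, bits=8):
    """Return the two's complement bit pattern of a signed integer."""
    low, high = -(1 << (bits - 1)), (1 << (bits - 1)) - 1
    if not low <= value <= high:
        raise ValueError(f"{value} does not fit into {bits} bits")
    # Masking maps negative numbers onto their unsigned bit pattern
    pattern = format(value & ((1 << bits) - 1), f"0{bits}b")
    # Group the bits by four for readability
    return " ".join(pattern[i:i + 4] for i in range(0, bits, 4))

for number in (127, 2, 1, 0, -1, -2, -128):
    print(number, "=", to_twos_complement(number))
```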

A larger number of bits gives a lower noise level. The noise defines the precision of the digital signal.

Floating-point bit depth

Floating-point samples are normalized: their magnitude lies in the range 0.0 ... 1.0 (the sample itself ranges from -1.0 to 1.0).

1.0 corresponds to the highest signal level.

There are no equally spaced borders between levels here: the step becomes smaller as the value becomes smaller. So floating-point bit depth keeps high precision for quiet signals compared with integer formats.

However, floating point is not applicable inside analog-to-digital and digital-to-analog converters, which work with integer samples.

Floating-point numbers are stored in computer memory. A standard memory cell for a floating-point number in audio applications contains 32 or 64 bits.
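Because converters work with integer samples, floating-point samples have to be mapped to integer levels at some point. The sketch below shows one common convention, assuming samples normalized to -1.0 ... 1.0 and scaling by the largest positive integer level. The function name `float_to_int` and the clipping behaviour are assumptions for this illustration, not a description of any particular converter or software.

```python
def float_to_int(sample, bit_depth=16):
    """Map a float sample in -1.0 ... 1.0 to a signed integer level."""
    max_level = 2 ** (bit_depth - 1) - 1      # 32767 for 16 bits
    clipped = max(-1.0, min(1.0, sample))     # values outside the range are clipped
    return int(round(clipped * max_level))

print(float_to_int(1.0))    # highest 16-bit level
print(float_to_int(0.0))    # silence
print(float_to_int(-1.0))   # lowest level used by this convention
```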

Does bit depth affect sound quality?

Bit depth defines the quantization noise level. Higher bit depth means lower noise.

There is a popular opinion that 16 bits is enough for the highest sound quality.

However, there are some details to consider.

Bit depth in simple words

We can imagine music as a puzzle picture.

Bit depth is like the size of the puzzle element.

Higher bit depth is like smaller elements.

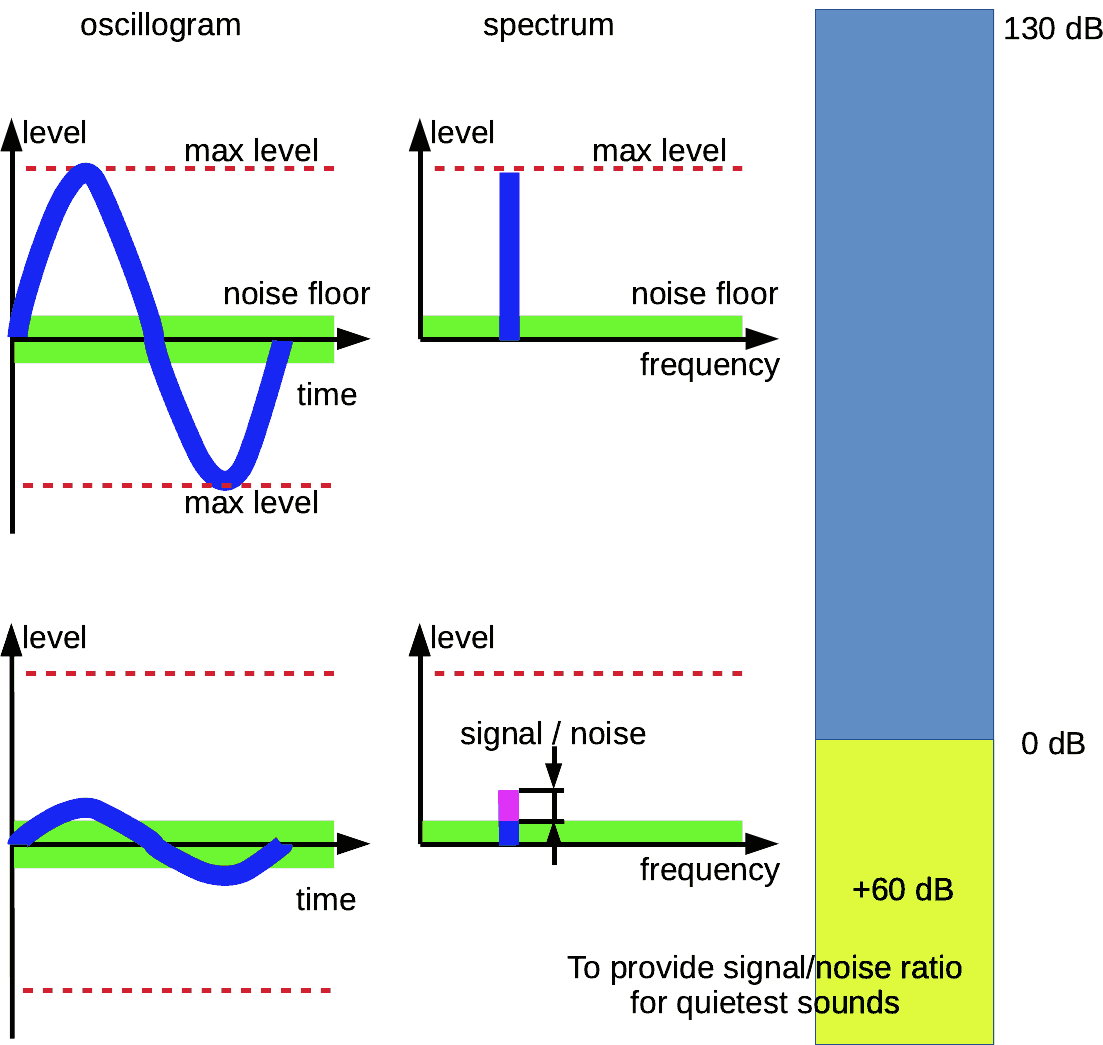

When bit depth is discussed, the human hearing range (about 130 dB from inaudible to pain) is taken as the reference value.

16-bit resolution has a difference of about 96 ... 110 dB between the lowest (noise floor) and highest peak levels.
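The lower figure of about 96 dB can be reproduced from the number of quantization levels. The sketch below uses the ratio between the full scale and one quantization step, expressed in decibels; the function name `dynamic_range_db` is hypothetical. It does not model dither or noise shaping, so it only illustrates the lower bound of the range quoted above.

```python
import math

def dynamic_range_db(bit_depth):
    """Ratio of full scale to one quantization step, in dB."""
    return 20 * math.log10(2 ** bit_depth)

for m in (8, 16, 24):
    print(m, "bits:", round(dynamic_range_db(m), 1), "dB")
```

Each bit adds about 6 dB, which gives roughly 96 dB for 16 bits and roughly 144 dB for 24 bits.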

That looks like enough to cover almost the full 130 dB range.

However, the range doesn't consider accuracy aspects.

The lower hearing threshold is 0 dB. It's a quiet rustle of leaves, as an example. Such a signal has its own signal/noise ratio too.

As an example, we want to provide a proper listening quality (like the 60 dB signal/noise ratio of tape) at the 0 dB audible level.

So we can add about 60 dB to 130 dB (a total of about 190 dB) to provide acceptable audibility at the lowest levels.
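The arithmetic of this hypothesis can be written out as a short sketch. It assumes about 6.02 dB per bit (20·log10(2)) and takes the 130 dB hearing range and the 60 dB tape-like signal/noise ratio from the text; the variable names are chosen only for this illustration.

```python
import math

hearing_range_db = 130   # from inaudible to pain, as stated in the text
wanted_snr_db = 60       # tape-like signal/noise ratio at the 0 dB level

total_db = hearing_range_db + wanted_snr_db
db_per_bit = 20 * math.log10(2)              # about 6.02 dB per bit
bits_needed = math.ceil(total_db / db_per_bit)

print(total_db, "dB needs at least", bits_needed, "bits")
```

Under these assumptions the required integer bit depth comes out well above 16 bits.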

Why is bit depth higher than 16 bits needed?

Look at the signal/noise ratio at the lowest loudness (quiet instruments).

These are not exact numbers, because the author doesn't have research results. But we can take it as a hypothesis.

So sample depths higher than 16 bits may be needed.

DSD solves the dynamic range issue via noise shaping.

EXAMPLE

ISSUE:

Suppose the low-level places of a song have a bad (low) signal/noise ratio.

We want to improve the listening experience of these places of the piece. So we turn up the volume control.

Now we hear these places better. But the noise is boosted together with the music, and it degrades the sound.
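The issue can be checked numerically: a volume boost multiplies the music and the noise by the same factor, so their ratio stays the same. In the sketch below the signal level, the noise level and the gain are hypothetical values picked only for the illustration.

```python
import math

def snr_db(signal_level, noise_level):
    """Signal/noise ratio in dB for two amplitude levels."""
    return 20 * math.log10(signal_level / noise_level)

signal, noise = 0.001, 0.00003   # hypothetical quiet passage and its noise
gain = 10.0                      # hypothetical volume boost (+20 dB)

before = snr_db(signal, noise)
after = snr_db(signal * gain, noise * gain)

print(round(before, 2), round(after, 2))  # the two ratios are equal
```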

SOLUTION:

To improve the quiet places of the piece, we should reduce the noise level there. If bit depth is the bottleneck, we can do nothing.

But a bit depth reserve gives us the ability to boost the record level:

- without increasing the noise level (if the DAC is the noise bottleneck); or

- with a signal/noise ratio of the quiet places that is good enough even at higher loudness.

Bit depth requirements

For different purposes, various bit depths are used:

Speech, lo-fi music - 8 bit

Hi-Fi, Hi-End CD, DVD-Video, downloaded audio - 16 bit

Hi-Fi, Hi-End DVD-Audio and DVD-Video, Blu-ray, downloaded audio - 24 bit

Studio recording and mixing (music production) - 24 or 32 bit integer, and 32 or 64 bit float

High-precision applications - 64 bit integer or floating point

Bit-depth video

Each pixel (a point of a monitor or TV screen) has 3 colours.

Each colour has a brightness range from 0 to a maximum, which is divided into a number of levels.

Bit depth is the number of bits in the binary number that matches the maximum level.

Example:

On the digital scale for the green component of one point of a TV screen:

Minimum brightness is 0.

Maximum brightness is 255.

255 as a binary number is 11111111. It's 8 bits.

A bit can be 1 or 0. Eight bits give 256 combinations of ones and zeroes, covering the range 0 ... 255.

So, bit depth here is 8 bits.
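The same example can be verified in Python. The sketch uses the built-in `int.bit_length()` method to count the bits of the maximum level; the total number of colours assumes that all 3 colour components of a pixel use the same bit depth, which is an assumption added for this illustration.

```python
max_brightness = 255

bit_depth = max_brightness.bit_length()   # bits needed to store the maximum level
levels = 2 ** bit_depth                   # brightness levels per colour
colours = levels ** 3                     # combinations of 3 colour components

print(format(max_brightness, "b"), bit_depth, levels, colours)
```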

Frequently Asked Questions

What does 32-bit depth mean?

32-bit depth means that each sample contains 32 bits.

A sample contains information about 1 audio channel. For a stereo digital audio signal, 1 sample of the left channel and 1 sample of the right one are united into a frame.

1 second of sound contains a number of frames that is equal to the sample rate.
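The relation between samples, frames and sample rate gives the amount of uncompressed audio data per second. Below is a minimal sketch; the function name `bytes_per_second` and the 44100 Hz sample rate are assumptions for the example, and the bit depth is assumed to be a whole number of bytes.

```python
def bytes_per_second(sample_rate, bit_depth, channels):
    """Uncompressed data rate: one frame holds one sample per channel."""
    frame_size = channels * bit_depth // 8   # bytes in one frame
    return sample_rate * frame_size          # frames per second = sample rate

print(bytes_per_second(44100, 16, 2))   # 16-bit stereo
print(bytes_per_second(44100, 32, 2))   # 32-bit stereo
```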
